AGIBOT today introduced GO-2, its next-generation foundation model for embodied AI. The company said GO-2 bridges the “last mile” from logical reasoning to precise execution within a unified architecture.

Building on its predecessor, GO-1, GO-2 introduces a unified architecture that integrates logical reasoning and action execution within a single system. This enables AI robots not only to plan correctly but also to execute reliably in real-world environments, said AGIBOT.

GO-2 brings together tens of thousands of hours of interaction data, claimed the company, marking a transition from “black-box exploration” to a “true unity of reasoning and action.”

GO Series evolves from perception to actuation

A year ago, AGIBOT released the Genie Operator-1 (GO-1) foundation model. Featuring the ViLLA architecture, it unified modeling of vision, language, and action. Today, AGIBOT integrated the model into its one-stop embodied development platform, Genie Studio, empowering users to deploy models and validate them in large-scale real-world applications.

GO-1 taught robots to “understand.” It could interpret instructions, recognize scenes, and plan tasks, said the company. However, as systems entered more complex real-world environments, a critical issue emerged: even with a reasonable plan, the robot’s actions did not always strictly adhere to it.

This is not a failure of planning; it is a fracture between reasoning and execution, asserted AGIBOT. It said the core cause is a long-standing challenge in robotics: the “semantic-actuation gap.”

In traditional vision-language-action (VLA) models, the high-level reasoning signals and real-world motor commands remain disconnected. During execution, control modules often bypass reasoning signals, leading to accumulated errors in long-horizon tasks and decreased system stability, noted the Shanghai-based company.

GO-2 achieves ‘unity of reasoning and action’

To achieve the unity of reasoning and action, said AGIBOT, a system must solve two key problems simultaneously:

- How to generate “executable” action plans through deep spatial reasoning

- How to ensure stable execution of those plans in real environments

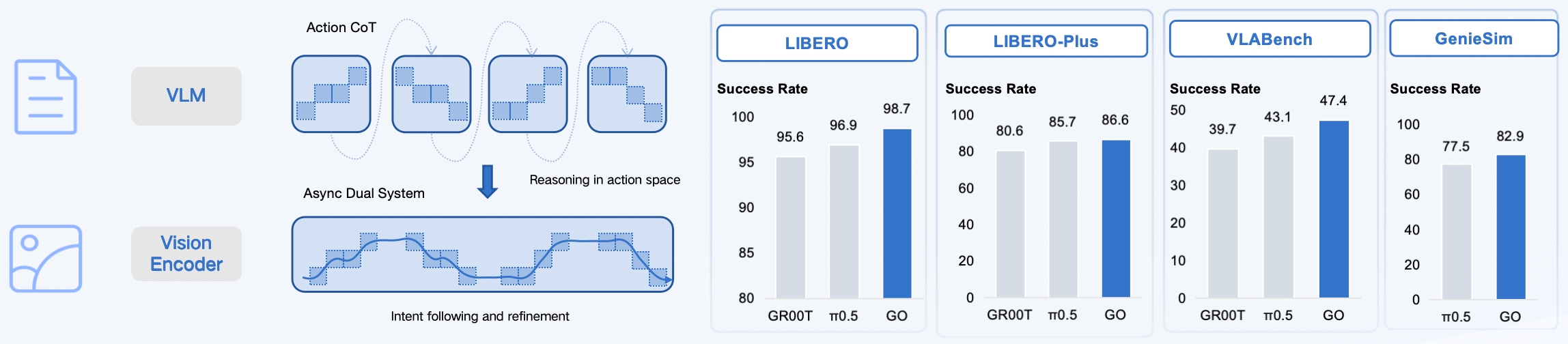

AGIBOT said it addresses these through an architecture built on two innovations. The first is action chain of thought. Unlike traditional models that map instructions directly to raw motor commands, GO-2 generates a high-level sequence of action intents as a macro plan.

Similar to how a human mentally simulates the arc of a basketball shot before releasing the ball, GO-2 makes this process explicit. Through action-level reasoning, the robot plans a complete behavioral path and executes it step by step. Complex tasks are naturally decomposed into ordered stages, ensuring that execution is built upon a foundation of clear, logical reasoning, explained AGIBOT.
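To make the idea of an explicit macro plan concrete, here is a minimal Python sketch. It is not AGIBOT's implementation: the `ActionIntent` structure, the `plan_action_chain` function, and the hand-written lookup table are all hypothetical stand-ins for what would be a learned model in GO-2.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ActionIntent:
    """One high-level step in a macro plan (hypothetical structure)."""
    verb: str
    target: str


def plan_action_chain(instruction: str) -> List[ActionIntent]:
    """Toy planner: returns an ordered list of intents for a known instruction.

    A real system would generate this sequence with a learned model;
    the fixed table below is an assumption made purely for illustration.
    """
    plans = {
        "put the cup on the shelf": [
            ActionIntent("reach", "cup"),
            ActionIntent("grasp", "cup"),
            ActionIntent("move", "shelf"),
            ActionIntent("release", "cup"),
        ],
    }
    return plans.get(instruction.lower().strip(), [])


def execute(plan: List[ActionIntent]) -> List[str]:
    """Walk the plan in order and record each stage as it is carried out."""
    log = []
    for step, intent in enumerate(plan, start=1):
        log.append(f"{step}: {intent.verb} {intent.target}")
    return log


if __name__ == "__main__":
    for line in execute(plan_action_chain("Put the cup on the shelf")):
        print(line)
```

The point of the sketch is only the shape of the data flow: the instruction first becomes an ordered list of intents, and execution then proceeds stage by stage rather than mapping the instruction straight to motor commands.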

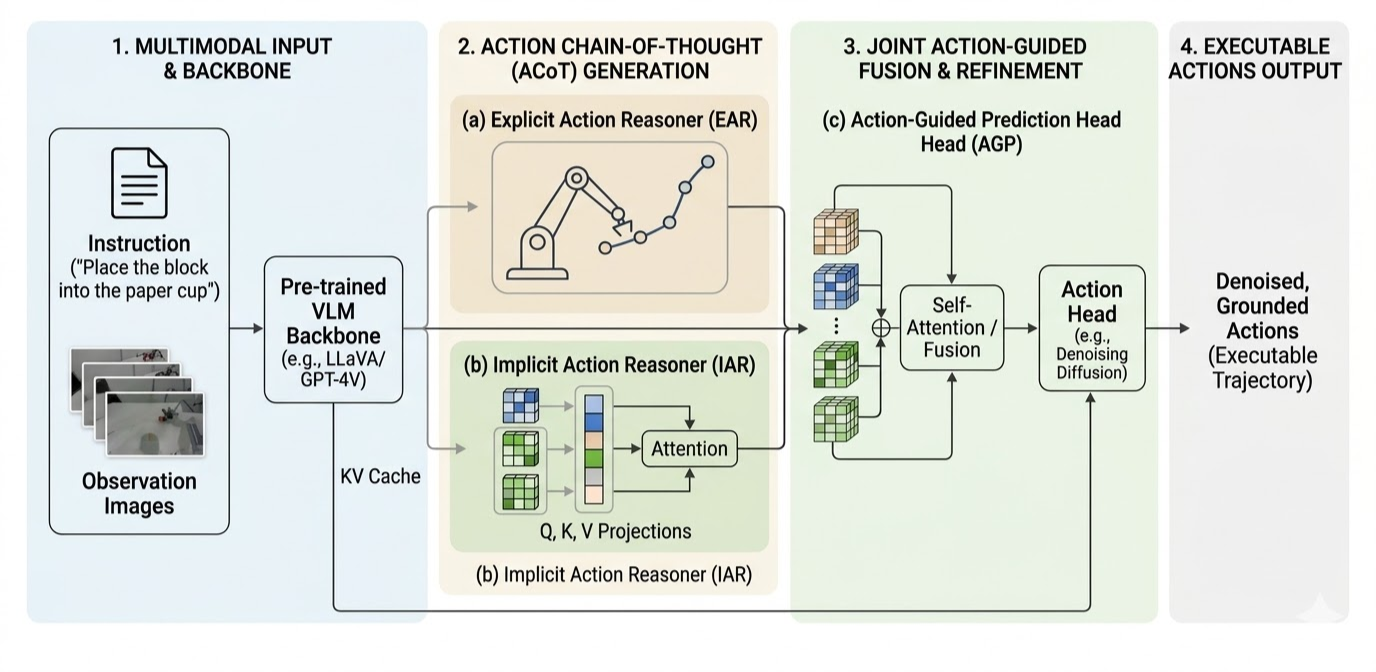

The second is an asynchronous dual-system design: low-frequency planning, high-frequency following. The company said high-level reasoning alone cannot guarantee stable execution in real-world environments filled with noise and disturbances.

To solve this, GO-2 introduces an Asynchronous Dual-System architecture to translate high-level reasoning into precise robotic movements. A semantic planning module operates at a lower frequency, acting as a “general commander.” This module generates structured high-level action sequences. These are presented through progressive refinement, ensuring that the reasoning itself is inherently “executable,” providing stable geometric anchors for control.

An action-following module, on the other hand, operates at a higher frequency. This acts as an “agile executor” that continuously receives high-level intents and combines them with real-time observations to generate specific control signals, performing residual refinement to compensate for environmental noise.
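The division of labor between a slow planner and a fast follower can be illustrated with a toy one-dimensional simulation in Python. This is a sketch under stated assumptions, not GO-2's architecture: the function names, the proportional-control follower, the constant disturbance, and the 10-to-1 rate ratio are all invented for illustration, and the loop runs in a single thread rather than truly asynchronously.

```python
from typing import List


def plan_waypoints(start: float, goal: float, n: int) -> List[float]:
    """Slow 'planner' (hypothetical): evenly spaced waypoints ending at the goal."""
    return [start + (goal - start) * (i + 1) / n for i in range(n)]


def follow(position: float, waypoint: float, gain: float = 0.5) -> float:
    """Fast 'follower' (hypothetical): one proportional correction toward the waypoint."""
    return position + gain * (waypoint - position)


def run(start: float = 0.0, goal: float = 1.0, n_waypoints: int = 4,
        ticks_per_plan_step: int = 10, disturbance: float = 0.01) -> float:
    """Simulate low-frequency planning with high-frequency following.

    The planner emits a new waypoint once every `ticks_per_plan_step` control
    ticks. On every tick the environment pushes the position by a constant
    `disturbance`, and the follower corrects using the latest observation.
    """
    position = start
    for waypoint in plan_waypoints(start, goal, n_waypoints):  # low frequency
        for _ in range(ticks_per_plan_step):                   # high frequency
            position += disturbance                # environment perturbs the state
            position = follow(position, waypoint)  # follower compensates
    return position


if __name__ == "__main__":
    print(f"final position: {run():.4f}")
```

In this toy setup the follower ends close to the goal despite being disturbed on every tick, because it corrects many times for each intent the planner issues. A simple proportional follower like this one leaves a small residual offset under a constant push.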

AGIBOT said these two systems are deeply aligned. To ensure that execution strictly adheres to reasoning, GO-2 uses a teacher-forcing mechanism during training. It teaches the model to perform robustly even under “approximately correct but imperfect” reasoning conditions.

GO-2 performs across different benchmarks

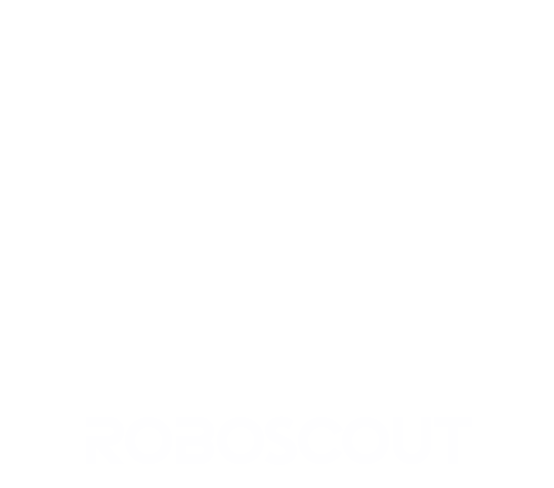

By bridging reasoning and action, AGIBOT said GO-2 achieves “a paradigm shift” in behavioral performance, significantly outperforming current mainstream models like π0.5 and NVIDIA GR00T:

- LIBERO benchmark: GO-2 ranks first across spatial, object, goal, and long tasks, with an average success rate of 98.5%.

- LIBERO-Plus benchmark: In environments with various disturbances, GO-2 achieved an 86.6% zero-shot success rate.

- VLABench benchmark: In rigorous tests for cross-category and texture generalization, GO-2 achieved an average score of 47.4, notably outperforming existing methods in handling diverse object textures and unseen categories.

- Genie Sim 3.0 (Sim-to-Real): Trained solely on simulation data, GO-2 achieved an 82.9% success rate in real-world testing.

From model to deployment: enabling continuous learning in the real world

Beyond model performance, AGIBOT said it is extending GO-2 into real-world deployment through a pre-training + post-training + data feedback loop paradigm. Integrated with Genie Studio, the system enables:

- Continuous data collection across fleets of robots

- Cloud-based collaborative training

- Online post-training in real-world environments

This infrastructure supports large-scale deployment and ongoing improvement, said the company. It can support thousands of robots in distributed training and achieve a roughly tenfold improvement in training efficiency.

The model can also reduce task startup time to just minutes, enable minute-level convergence in industrial tasks, and improve rates by two to four times while reducing data requirements by over 50%.

This transforms GO-2 from a static model into a continuously evolving embodied system, according to AGIBOT.

Editor’s note: At the 2026 Robotics Summit & Expo on May 27 and 28 in Boston, there will be sessions on embodied and physical AI. Registration is now open.

AGIBOT moves toward embodied agents with memory

Beyond stable execution, AGIBOT is exploring the next frontier: can robots remember and become smarter over time?

Its latest research introduces the OpenClaw Memory System (arXiv:2603.11558), providing robots with long-term memory to reuse reasoning traces from historical interactions.

By combining action reasoning, hierarchical execution, and long-term memory, AGIBOT said it hopes to form a complete intelligent loop: from perception to reasoning to action to memory.

The post AGIBOT releases GO-2 foundation model for embodied AI appeared first on The Robot Report.