AGIBOT’s robot has a dexterous design to collect and use data. Source: AGIBOT

As robotics research moves beyond controlled lab settings into real-world environments, the demand for large-scale, high-quality data has become increasingly critical, according to AGIBOT. The company today released AGIBOT WORLD 2026, an open-source heterogeneous dataset it said is designed to systematically support five key research pathways in embodied intelligence.

“The dataset features structured, high-quality, and precisely annotated real-world robot data, providing developers and researchers with a robust foundation for training next-generation embodied AI systems,” said AGIBOT.

Editor’s note: At the 2026 Robotics Summit & Expo on May 27 and 28 in Boston, there will be sessions on embodied and physical AI, as well as on humanoid robot development. Registration is now open.

AGIBOT WORLD follows free-form data-collection strategy

AGIBOT WORLD 2026 spans a wide range of real-world environments, including commercial spaces, homes, and everyday scenarios. AGIBOT said this captures the complexity, variability, and unpredictability that robots must handle in practice.

Unlike conventional datasets built on repetitive and scripted demonstrations, the Shanghai-based company has taken a free-form data-collection approach, in which teleoperators dynamically perform tasks based on real-time conditions.

It claimed that this strategy can significantly enhance diversity within each episode and improve generalization across multiple dimensions, including object categories, initial configurations, and task execution sequences. AGIBOT said its robot uses a flexible wheeled base, articulated head and waist movements, and lift-pitch capabilities for efficient, natural, and highly transferable data collection.

In parallel, AGIBOT constructs 1:1 digital twin environments in simulation, with all corresponding simulation data released alongside the real-world dataset.

AGIBOT says free-form data collection ensures comprehensive generalization. Source: AGIBOT

Innovations bridge the gap between data, real robot behavior

“A fundamental question in embodied AI remains: Does the data truly reflect how a robot operates as an integrated system?” noted AGIBOT. To address this, the company has introduced several features:

- Whole-body control (WBC): This enables coordinated control of arms, waist, and hands, allowing robots to perform tasks more fluidly as a unified system rather than through isolated motions.

- First-person beyond-visual-range teleoperation: The robot’s perception is aligned with that of the operator, enabling more intuitive, continuous, and transferable control.

- Force-controlled data collection: AGIBOT said it has incorporated contact dynamics and force feedback, capturing not only motion trajectories, but also real physical interactions.

“Together, these capabilities ensure that the dataset more accurately represents real-world robot behavior,” asserted the company.

Whole-body control allows for smooth movement of the entire robot. Source: AGIBOT

Industrial-grade hardware feeds the data pipeline

AGIBOT explained that the dataset is collected on its G2 hardware platform, which integrates high-performance joint actuators, multi-modal sensors, and a high-performance domain controller to support precise force control and scalable development.

Equipped with Zhixing 90D grippers and the dexterous OmniHand, the G2 captures synchronized multi-modal data—including RGB(D), tactile signals, lidar point clouds, IMU data, and full-body joint states—within a unified pipeline.
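AGIBOT has not described how the streams are synchronized. As a rough illustration of what aligning sensors that run at different rates can involve, the sketch below matches each camera frame to the nearest IMU sample by timestamp. The function names, the sample timestamps, and the `max_gap` tolerance are assumptions made for this example, not part of the company's pipeline.

```python
from bisect import bisect_left
from typing import List, Optional, Sequence


def nearest_index(timestamps: Sequence[float], t: float) -> int:
    """Return the index of the entry in sorted `timestamps` closest to `t`."""
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Choose whichever neighbor is closer; ties go to the earlier sample.
    return i if timestamps[i] - t < t - timestamps[i - 1] else i - 1


def align(reference: Sequence[float], other: Sequence[float],
          max_gap: float) -> List[Optional[int]]:
    """For each reference timestamp, give the index of the nearest sample
    in `other`, or None when no sample lies within `max_gap` seconds."""
    if not other:
        return [None] * len(reference)
    matches: List[Optional[int]] = []
    for t in reference:
        j = nearest_index(other, t)
        matches.append(j if abs(other[j] - t) <= max_gap else None)
    return matches


# Hypothetical timestamps in seconds: a 10 Hz camera and an irregular IMU.
camera_ts = [0.00, 0.10, 0.20]
imu_ts = [0.00, 0.04, 0.09, 0.15, 0.21, 0.50]
aligned = align(camera_ts, imu_ts, max_gap=0.02)
```

Frames with no sample inside the tolerance come back as `None`, so a downstream step can decide whether to drop or interpolate them.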

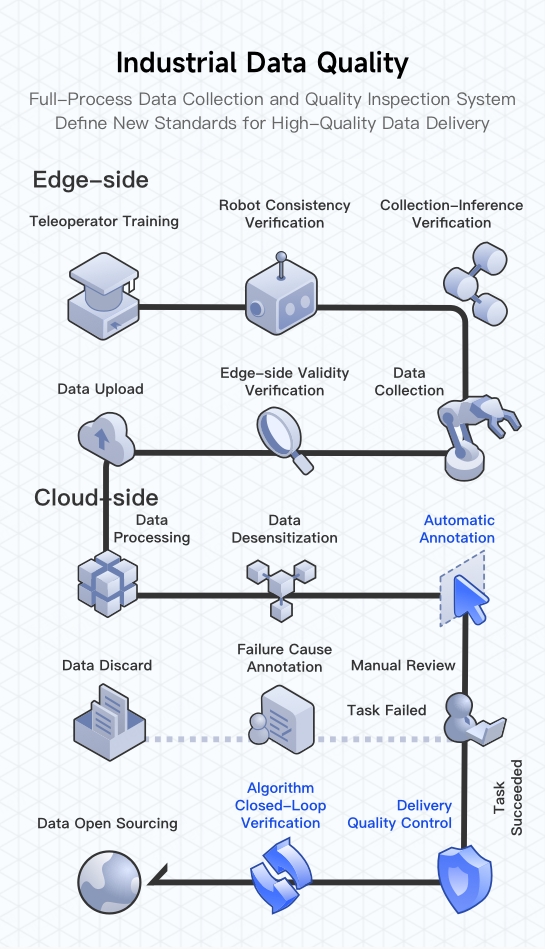

AGIBOT added that each data episode undergoes rigorous cleaning and validation through its “industrial-grade” data-processing system, ensuring readiness for large-scale model training and research applications.

Source: AGIBOT

Phase 1 release: Imitation learning

The company said it plans to release AGIBOT WORLD 2026 in five phases, each aligned with a core research direction in embodied intelligence.

The first release focuses on imitation learning, a key paradigm that enables robots to acquire complex physical skills from expert demonstrations. This phase includes hundreds of hours of real-world data collected primarily in commercial and service environments. The dataset combines:

- Task-level descriptions (segment-level instructions)

- Action sequences (step-by-step execution)

- Atomic skill labels (e.g., pull, place)

- Object annotations (2D bounding boxes and attributes such as name and color)

“Importantly, error-recovery trajectories are also retained and annotated,” said AGIBOT. “This hierarchical annotation framework—spanning from high-level tasks to low-level actions—provides the fidelity and corrective priors needed to train more robust and adaptive embodied agents.”
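To make the hierarchy concrete, the following sketch shows one way a single annotated episode could be represented in code. It is an illustration only: the class names, field names, frame ranges, and bounding-box convention are assumptions, and the actual AGIBOT WORLD 2026 schema may differ.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class ObjectAnnotation:
    name: str
    color: str
    # 2D bounding box as (x_min, y_min, x_max, y_max) in pixels (assumed convention).
    bbox_xyxy: Tuple[float, float, float, float]


@dataclass
class ActionStep:
    description: str          # step-by-step instruction
    atomic_skill: str         # e.g. "pull", "place"
    start_frame: int
    end_frame: int
    is_error_recovery: bool = False
    objects: List[ObjectAnnotation] = field(default_factory=list)


@dataclass
class Episode:
    task_description: str     # task-level description
    steps: List[ActionStep] = field(default_factory=list)

    def skills(self) -> List[str]:
        """Atomic skill labels in execution order."""
        return [s.atomic_skill for s in self.steps]

    def recovery_steps(self) -> List[ActionStep]:
        """Steps annotated as error recovery."""
        return [s for s in self.steps if s.is_error_recovery]


# Hypothetical episode, invented for illustration.
episode = Episode(
    task_description="Restock the shelf with a bottle",
    steps=[
        ActionStep("Pull the bottle from the crate", "pull", 0, 120,
                   objects=[ObjectAnnotation("bottle", "green",
                                             (40.0, 60.0, 110.0, 220.0))]),
        ActionStep("Re-grasp the bottle after it slips", "pull", 121, 180,
                   is_error_recovery=True),
        ActionStep("Place the bottle on the shelf", "place", 181, 300),
    ],
)
```

Keeping the recovery flag at the step level lets a training pipeline include or filter corrective segments without discarding the rest of the episode.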

AGIBOT WORLD 2026 uses a hierarchical annotation framework. Source: AGIBOT

AGIBOT WORLD part of a long-term commitment to embodied AI ecosystem

AGIBOT said it is among a small group of startups taking a long-term, infrastructure-driven approach to embodied intelligence.

Recognizing early that high-quality data is foundational to unlocking the next generation of robotic capabilities, the company has consistently open-sourced million-scale real-world and simulation datasets.

AGIBOT said this effort reflects its broader goal in embodied intelligence: to democratize access to high-quality robot data. Through the continued evolution of the AGIBOT WORLD ecosystem, the company aims to contribute to the global robotics community and accelerate the transition of embodied AI from research labs into real-world applications.

AGIBOT WORLD 2026 draws on real-world scenarios. Source: AGIBOT

The post AGIBOT WORLD 2026 dataset is open-source to accelerate embodied AI development appeared first on The Robot Report.